Q: Hi Michael. Welcome to the Big Unlock podcast. Very excited to have you here. The healthcare audience is super excited to listen to our podcast. I’m Rohit, the Managing Partner and CEO at BigRio and Damo Consulting. With that, I’d like to request you to introduce yourself, Michael.

Michael: Yeah, I’m excited to be here too. I’m Michael Marchant, Director for Digital Applications at Sutter Health. I’ve been here almost a year now, focusing on core interoperability, enterprise data integration, and also running our Community Connect program with about 250 practices.

I’ve been in interoperability for a long time. I’ve participated on the Epic Care Everywhere Governing Council, I’m on the Carequality Advisory Council, and I serve on the eHealth Exchange Board. So, interoperability and health technology are core to what I do every day—making decisions and planning for how we get people the data they need to make decisions around their healthcare. Excited to be here.

Q: That’s great. Michael, would you like to share your journey in healthcare? What got you started? What roles have you played, and what do you find interesting about this field?

Michael: It’s a long and interesting story. I went to college in Virginia at Old Dominion University, studying Human Resource Management. I started as an internal auditor. My father worked for a small software company called Comcare, and when I moved to California, they were looking for someone to install patient financial software—AP, GL, payroll, personnel.

I had some technical background but no real experience in software implementation. They were willing to train me, and I got on board. My first foray into interoperability was doing payroll installs—sending data from point A to point B to ensure employees got paid. That was my first critical experience with data exchange.

I moved from payroll into accounts payable and general ledger—again, lots of data movement and system frameworks. Comcare had a partnership with DataGate, which had an interface engine, and that’s where I started programming. That kicked off my career in health IT.

After having kids, Southern California became expensive, and my wife, despite her advanced degree, wanted to stay home with the kids. So, we moved to Sacramento, and I started with Sutter. I was part of the Y2K program with MedSeries4, working on integration across sites to ensure interfaces were Y2K-compliant—moving from two-digit to four-digit years and ensuring backward compatibility.

At Sutter, I moved up from analyst and project manager to director. Sutter Connect brought me over to run what we then called the Independent Practice Services program—it wasn’t yet Community Connect. We deployed GE’s product (GE had acquired IDX) to about 50 practices and 100 physicians before I moved on.

After that, I worked with the state contractor for the EHR Incentive Program. ACS Xerox partnered with California’s Department of Health Care Services to build the state’s enrollment portal for incentive funds. I helped with training, enrollment, and deployment. By the end, we had paid out about $500 million in incentives.

Then I went to UC Davis, where I was Director of the Interop and Innovation Team. That’s when I got deeply involved in the industry—Carequality, HL7’s Interop Committee, and the blockchain task force. I still believe blockchain has potential, even if people aren’t quite comfortable with it yet.

I spent about 10 years at UC Davis and recently returned to Sutter, leading two teams. For me, technology is in the background, serving a purpose—automating, simplifying, accelerating. It’s about getting the right information to the right people so they can make the best decisions about care.

That’s what drives me: improving processes and delivering value to both internal and external stakeholders, including our physicians, clinicians, and, most importantly, the patients. This work creates better care, and I try to reinforce that with our teams. Even when people are buried in the weeds of programming or writing specs, I try to help them see the forest for the trees.

Q: That’s awesome. And Michael, could you talk to us at a high level about Sutter as an organization—patients being served, locations, hospitals, physicians, and overall size?

Michael: For sure. Sutter Health is a large organization in Northern California. The Sutter footprint goes from about Sacramento, or a little north and east of Sacramento, down to Santa Barbara. We recently acquired the Sansum Clinics in the Santa Barbara area. So, from north of Sacramento to Santa Barbara, you’ll find Sutter entities and coverage.

We have around 25 to 27 hospitals, a number of ambulatory surgery centers, and we’re continuing to grow in the ASC space. We’re building a couple of cancer centers and a few other joint ventures. We really serve the population of Northern California—probably 2 to 3 million covered lives from a value-based care perspective, and hundreds of thousands of visits. We have a strong online presence as well as a physical presence in many Northern California communities, always aiming to deliver the best care.

Sutter has won a lot of awards—I don’t have my slide deck in front of me, but if I were doing a PowerPoint, I’d have the slide with all the awards.

Q: This high-level information is awesome, thank you. It’s good to know—it’s a pretty large footprint. With that, I’d like to segue into the first question. We were talking earlier about the CMS and ONC RFI that’s out right now and the TEFCA implementations you’ve seen to date. How do you think about this emerging process?

Michael: Yeah, I’ll start with TEFCA and then go to the RFI. The general idea from ONC, now under CMS, is to expand the capabilities of our national networks.

Historically, eHealth Exchange, CommonWell, and Carequality were the three national networks enabling organizations to do nationwide data exchange—mostly CCD documents—on a request-response basis, securing the connections and delivering data to the requesting organizations.

We’ve grown that network significantly. In a recent committee meeting, we learned that 1.1 billion documents were exchanged in April alone between participating organizations. The structure of those networks is treatment-based exchange—organizations treating patients and needing information to assess and understand the patient’s history to provide the best real-time care.

But we kind of stalled there. We were stuck at treatment-only. Many organizations that needed access to information couldn’t participate. During COVID, public health access became paramount, and CMS allowed some exceptions under emergency orders, which enabled that information exchange. But broader public health and operational exchanges, such as care gap closures in value-based populations, aren’t widely supported on treatment-based networks.

TEFCA’s promise is to expand this. Many healthcare ecosystem organizations—long-term care, SNFs, nursing homes, and community providers—weren’t eligible under the EHR Incentive Program and thus aren’t participants in the current networks. Roughly 30% of healthcare data holders aren’t participating in current treatment-based exchanges. TEFCA aims to bring them in and broaden the scope of who can participate.

It’s not just about treatment. The next phases involve operational exchange, payment exchange, public health, and research. The idea is to build a network that supports all of these use cases and welcomes organizations that couldn’t participate before—either because they’re not involved in treatment or they’re not HIPAA-covered entities.

CMS is taking the lead. There are now 8 to 10 QHINs (Qualified Health Information Networks). Most EHR vendors, Surescripts, eHealth Exchange, KONZA, and others are participating. The RFI seeks broad input on how to support this ecosystem: improving provider adoption and usability of digital tools, strengthening data exchange, ensuring access to comprehensive patient data, and advancing value-based care through technology.

It’s about identifying data needs, reducing administrative burdens, enabling patient and caregiver access to digital tools, managing identity, ensuring equity in digital health access, and setting future-proof certification criteria for participation.

I encourage everyone to comment and provide feedback to CMS based on their experiences—so these standards and frameworks are implemented correctly. That participation is vital.

Q: That’s awesome to know. Now, moving forward, Michael—on digital health tools, AI, and of course now GenAI and all the new LLMs coming into play.

Could you talk with us about the risk profiles and risk appetite for health systems, and share some examples you’ve seen at Sutter or at UC Davis?

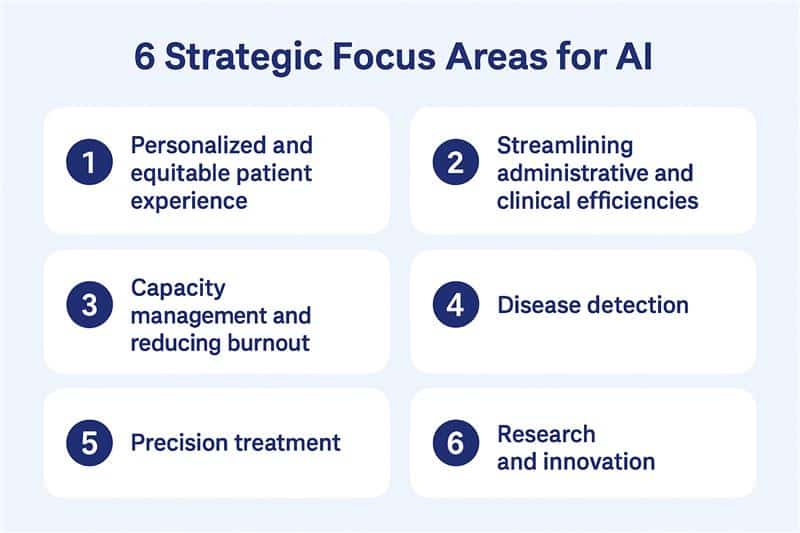

Michael: Yeah, for sure. AI is used for a large number of things. NLP, machine learning, and RPA have been in place for a while, and we’ve been leveraging them. Now we’ve wrapped them under the umbrella of AI, but the buzz right now is around GenAI, especially ambient voice.

The idea is a physician in the room with a patient, having a conversation while an ambient agent listens in the background. It generates a note at the end of the encounter, allowing the physician to focus on the patient, not the computer. It makes the interaction more personal. The agent formats the note with relevant information while excluding casual conversation.

This technology has helped physicians get off the keyboard and return to practicing medicine. Satisfaction scores are high. It’s reduced pajama time—charting after hours—for those using it. We’re working to expand adoption across the board.

We’ve seen similar value in Epic. There’s an inbox agent that can read patient messages, prioritize them, and even draft responses. A physician managing a thousand patients receives a lot of messages. The AI agent helps flag urgent ones and draft thoughtful replies. In some cases, the AI-written replies are more empathetic than the rushed responses doctors might send. It’s all reviewed and approved by the physician, of course, but the assistive value is significant.

In radiology, AI helps prioritize breast imaging. It flags anything abnormal and puts it at the top of the radiologist’s worklist instead of first-in, first-out. That ensures patients with serious concerns are seen sooner. Given the shortage of radiologists, this makes a big difference.
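The worklist reordering described above can be illustrated with a small priority queue. This is only a sketch under stated assumptions: the `Worklist` class, the `flagged_abnormal` input, and the study IDs are hypothetical, and it does not represent how any radiology product actually works. Flagged studies are read first, and arrival order is preserved within each group.

```python
import heapq
from itertools import count


class Worklist:
    """Hypothetical reading worklist: flagged studies first, then arrival order."""

    def __init__(self):
        self._heap = []
        self._arrival = count()  # monotonically increasing arrival counter

    def add(self, study_id, flagged_abnormal):
        # Priority 0 sorts ahead of priority 1; the arrival counter breaks ties,
        # so studies with the same priority stay first-in, first-out.
        priority = 0 if flagged_abnormal else 1
        heapq.heappush(self._heap, (priority, next(self._arrival), study_id))

    def next_study(self):
        return heapq.heappop(self._heap)[2]

    def __len__(self):
        return len(self._heap)


def read_order(studies):
    """Return study IDs in the order a radiologist would see them."""
    worklist = Worklist()
    for study_id, flagged in studies:
        worklist.add(study_id, flagged)
    return [worklist.next_study() for _ in range(len(worklist))]


if __name__ == "__main__":
    incoming = [("A", False), ("B", True), ("C", False), ("D", True)]
    print(read_order(incoming))
```

With plain first-in, first-out ordering, study B would wait behind A. Here the two flagged studies move to the front while everything else keeps its place in line.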

We also implemented a tool for diabetic retinopathy screening. It takes images of the eye and scores them. An untrained person can operate the device, which helps us scale the service. Previously, there was a long wait for tests—now we can screen thousands of patients and prioritize those needing care.

So across the board, these tools are assistive. They don’t replace clinicians but help deliver faster, more targeted care.

Q: That’s great to know. So do you think, Michael, that at any point, any of this AI — in the examples you might be working on or seeing — is going to be autonomous?

Michael: I think there are probably some places where autonomous activity can occur in low-risk areas, and that’s where you move to the back office, right? A lot of the patient-facing care applications are going to be assistive or augmented rather than delivering the final result.

Things in the administrative area — like claims and claims status — a lot of that is logging into portals, sending messages, or even sending a fax. Those can be automated. Responses can be automated. You can OCR faxes and apply some RPA or ML rules to those transactions and execute commands. It happens in a repeatable, machine-like way.

But when you’re talking about a patient having a procedure or getting a test or result, the AI can help look at larger datasets. Say you have a patient with a specific condition. You can use an LLM to say, “I have Rohit, who has this condition. What are the outcomes for others with similar conditions? What medications did they take?” You help frame a care plan based on data from 100,000 patients instead of anecdotal information or outdated knowledge. So you’ll see more research-integrated LLM data that allows physicians to target patients with similar profiles — but it’ll still be assistive and suggestive, not making final decisions.

Q: That’s a good way to think of it. The key thing here is the risk factor. Lowest risk things you might automate; high risk, you lean towards assistance.

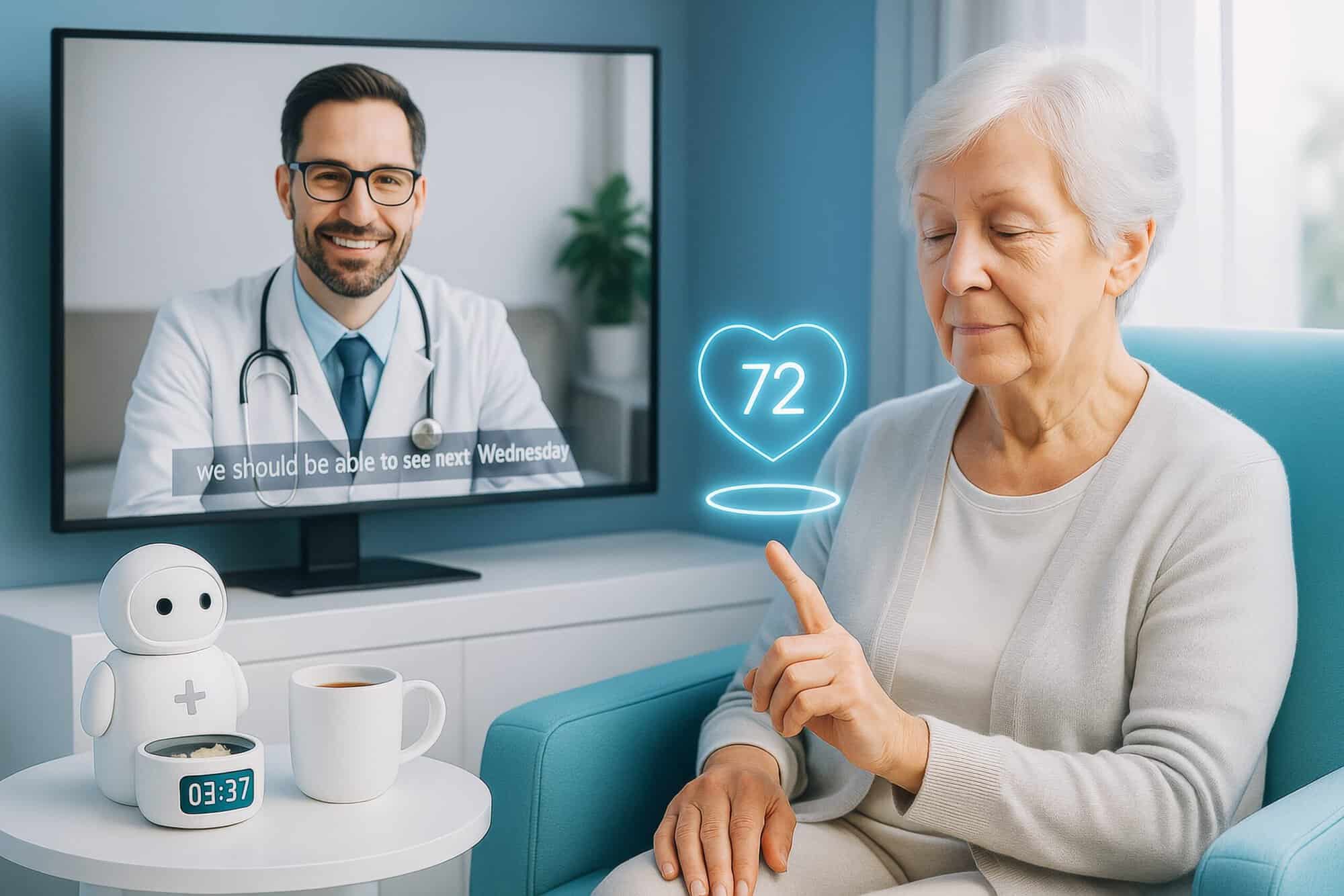

Michael: Yeah, I’ll give you an easy example here. I’m in IT, so I’m participating in a pilot with a blood pressure device.

What happens is I take my blood pressure, and the reading goes to Epic. Part of the AI in the background looks at the readings, and if mine is not what it should be, it sends me a text saying, “Hey, your reading was out of band. You might want to take a sip of water, relax for a second, and retake your blood pressure in 15 minutes.”

All of that is low risk and automated. Nobody has to review the score. It knows it’s out of band, and it recognizes there might be some environmental factors impacting the reading. So it automatically sends me a suggestion: “Hey, your blood pressure was high. Could you take another reading in 15 minutes after calming down or making sure nothing was off?”

That sort of automation is low risk. It reviews the data — so there’s some oversight — and it actually gives the patient comfort. It makes you think, “Oh wow, someone’s paying attention,” and that kind of outreach builds confidence. It’s a patient satisfier. It improves safety. There are a lot of benefits — and again, it’s low risk.

You know, I’ve done it in the office — taken a reading, it was a little high, and they’d say, “Okay, let’s take it again.” Two minutes later, it’s normal. So this is similar. And when you think about caring for a larger set of patients, we’re going to distribute that blood pressure cuff to thousands of people. We don’t need a ton of nurses looking at every result. We just need the rules in place: if something’s out of band, ask for another reading. If it’s out again, then alert a clinician.
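The rule described here (one out-of-band reading triggers a retake request, a second one in a row alerts a clinician) can be sketched in a few lines. Everything in this example is an assumption made for illustration: the threshold values, the `Reading` and `Action` names, and the choice to check only high readings. It is not clinical guidance and not the logic of the actual pilot.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    NONE = "no action"
    REQUEST_RETAKE = "ask patient to retake in 15 minutes"
    ALERT_CLINICIAN = "alert a clinician"


@dataclass(frozen=True)
class Reading:
    systolic: int
    diastolic: int


# Hypothetical thresholds, chosen only to make the example concrete.
SYSTOLIC_MAX = 140
DIASTOLIC_MAX = 90


def out_of_band(reading):
    """True when either value exceeds its (assumed) upper limit."""
    return reading.systolic > SYSTOLIC_MAX or reading.diastolic > DIASTOLIC_MAX


def triage(readings):
    """Return one Action per reading, escalating on consecutive out-of-band values."""
    actions = []
    previous_out = False
    for reading in readings:
        current_out = out_of_band(reading)
        if not current_out:
            actions.append(Action.NONE)
        elif previous_out:
            actions.append(Action.ALERT_CLINICIAN)
        else:
            actions.append(Action.REQUEST_RETAKE)
        previous_out = current_out
    return actions


if __name__ == "__main__":
    history = [Reading(150, 95), Reading(122, 80), Reading(155, 96), Reading(158, 99)]
    for reading, action in zip(history, triage(history)):
        print(reading, "->", action.value)
```

A normal retake resets the escalation, which mirrors the in-office experience of a second reading coming back fine. Only a repeated out-of-band result reaches a person.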

Then, that physician or clinician might say, “Hey, you’ve had a couple high readings in a row. We may need to adjust your medication. We might need to bring you in. Or, this looks serious — please go to the ER.”

That’s where you apply the right resources in the right way. AI becomes a tool to help prioritize risk. For patients who are higher risk — and where there’s information you can act on quickly — a person can step in rather than relying solely on tech.

As we get more advanced, we’ll expand this kind of approach into other use cases beyond blood pressure to truly provide personalized medicine. Back when Bush talked about personalized medicine, it was tied to genetics and DNA. But I think personalized medicine is really about treating each patient as an individual and giving them feedback and support to manage their condition proactively — before there’s an adverse health event.

That’s what value-based care is about. We want to keep people out of the hospital and care for them before they get sick. These low-risk AI tools make a lot of sense for that. We just need to figure out a reimbursement and incentive model that encourages both patients and providers to adopt them — instead of waiting until people get sick and need hospital care, which, of course, is expensive for everyone.

Q: Of course. So Michael, moving on to another aspect of innovation—thinking about it in the framework of new announcements. Oracle has announced a completely new redesign of the EMR system, and we all know Epic is the big, heavily used system by health plans and health systems. How do you think about innovation in this scenario?

Michael: Epic is a significant vendor in the electronic health record space and a market leader. They deliver great technology. I’ve been a customer of Epic since 1998, so I’m very familiar with them and their architecture.

I think one of the key aspects of modernizing electronic health records is abstracting the data, workflow, and technology. Epic, like most of the historical EHRs, was built first as a billing system, and then as a clinical documentation system. A lot of the workflows inside current EHRs, including Epic, are really tied to documenting the right things so that when we send the claim to the payer, it’s approved—not denied—because we’ve checked all the right boxes or coded it correctly.

Looking at Oracle’s new ambitions—they’re focused on an AI-native, cloud-native, redesigned user experience that really puts clinical workflow first. Instead of thinking about billing first, they’re thinking about the clinician experience first, and then reverse-engineering it to handle administrative tasks using AI and other tools.

Whether it’s Epic, Meditech, Cerner, Oracle, Allscripts, Greenway, Athena—you name it—the future is about enabling the right workflow for the right specialty and clinician. There should be personalization that allows them to work in a way that makes sense to them, while still giving access to all the necessary data.

If we can create data liquidity—which is what they’re aiming for with FHIR APIs and API-enablement on the back end—then we’re no longer chained to Epic or Oracle’s UI. If we can access the underlying data structures, we can build apps on top of that to create more fluid, user-based workflows.
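To make the idea of reaching the data through FHIR APIs a little more concrete, here is a minimal sketch that builds a standard FHIR search URL for a patient's vital-sign observations. The base URL, patient ID, and function name are hypothetical placeholders. The search parameters (`patient`, `category`, `_sort`, `_count`) come from the FHIR specification, but whether a given EHR's endpoint supports each of them is an assumption, and a real app would also need authorization, for example through a SMART-on-FHIR launch.

```python
from urllib.parse import urlencode


def vital_signs_search_url(base_url, patient_id, count=20):
    """Build a FHIR search URL for a patient's most recent vital-sign Observations."""
    params = {
        "patient": patient_id,       # restrict results to one patient
        "category": "vital-signs",   # standard Observation category code
        "_sort": "-date",            # newest first
        "_count": count,             # page size
    }
    return f"{base_url.rstrip('/')}/Observation?{urlencode(params)}"


if __name__ == "__main__":
    # Hypothetical endpoint and patient ID, for illustration only.
    print(vital_signs_search_url("https://fhir.example.org/r4/", "123"))
```

The point of the sketch is that the query is independent of any vendor's user interface. An app built on calls like this can present the data in whatever workflow suits the clinician.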

We’ve gone through different UI development phases—widgets, phone apps, watch apps—and the future will be about building UIs that are intuitive for clinicians. They should meet clinical needs first, and then use AI and automation to handle the back end—like billing, compliance, and documentation. That way, we’re not robbing innovation on the front end just to serve billing on the back end.

You’ll prompt clinicians when needed—like, “Did you ask about smoking status?”—instead of forcing them to check endless boxes. Whether it’s Oracle or a smart startup, someone is going to leverage existing EHRs—like Epic’s infrastructure and data—but create a much better UI and clinical experience on top.

Replacing an EHR is incredibly expensive. So instead of ripping and replacing Epic, maybe you introduce a SMART-on-FHIR application for cardiology that works better than what Epic has. You get some market traction, then build apps for other specialties.

You’re not going best-of-breed across everything—just best-of-breed on UI—while still using your existing EHR’s infrastructure and data model to drive the new experience.

Q: That’s a great perspective on innovation, and I really like the term you used—data liquidity. It’s an awesome way to think about it. As we come toward the end of our podcast, Michael, I wanted to touch on one more topic—the California Data Exchange Framework, which you mentioned earlier. Tell us more about that and where you see it going.

Michael: Yeah, so California—like every state—thinks they’re unique. Governor Newsom signed the Data Exchange Framework legislation a couple of years ago. It’s essentially establishing a parallel data exchange within the state that allows a person living in California to access their longitudinal health record, including social services data and public health data.

They’ve created a TEFCA-like model with QHIOs—Qualified Health Information Organizations—that help facilitate participation. There are requirements around admit notifications, panel management, direct messaging, and data exchange for treatment and public health operations.

Right now, the Data Exchange Framework is a legal framework, but not a technology framework. They’re trying to be technology-agnostic and allow organizations to participate using whatever tools they currently have.

One challenge is the need for point-to-point connections. If I want to exchange data with, say, a housing agency or public health department in Sacramento, I have to figure that out directly with them. But the legal framework provides cover—a data use agreement that says the state has authorized the exchange, so we don’t need separate agreements.

Many groups involved—like housing or public health—haven’t historically participated in data exchange. So the questions are: What data can public health have? What can a housing agency or non-covered entity access? How do we ensure the right security profile? Who’s authorized to participate?

Today, to find out who’s signed the Data Exchange Framework, you have to visit a website and look them up on an Excel spreadsheet. That’s obviously not scalable. The future needs to include a real technical infrastructure to support this exchange—not just point-to-point connections, but a true network effect.

It’s similar to TEFCA but running in parallel. I’m working with BluePath Health, EMI Advisors, and others on this in California. I serve on the Technical Advisory Committee led by CDII to provide feedback and help define the standards and frameworks to enable this exchange.

It’s an exciting initiative. SB 660 is moving through committee and will help enforce participation—not just in signing agreements, but in actually exchanging data. That’ll give the framework some real teeth.

Of course, there are challenges—AB 352, for instance, which prevents abortion-related data from leaving the state. So there are lots of complexities. But California is serious about interoperability, governance, and regulation, and we’re all working to comply. Hopefully, this framework will help us get there.

Q: That’s awesome, Michael. So, any other parting thoughts for the listeners of the podcast from your side before we wrap up?

Michael: There’s a lot happening, and it can feel overwhelming. What I always tell people—like I tell my kids going off to college—is: get involved. Even if it’s something small, participate. Find people who are involved, ask questions, learn from them.

Many of the things I’ve learned came from conversations with really smart people. I participate in the Health Tech Talk Show with Lisa Bari and Kat McDavitt, who are incredibly knowledgeable. Lisa is the former CEO at Civitas and now works at Innovaccer. Brendan Keeler also shares great content on LinkedIn and through his blog.

Join a committee. Help make sure your organization is ready. If you’re in the interoperability or health tech space and you’re not moving forward, you’re going to get left behind.

Engage, understand, and provide feedback, especially on things like RFIs. If you haven’t read them, plug them into your AI, get a summary, and start there. Use the tools we have.

And I always tell people: I’m an open book. If you have questions, feel free to connect with me on LinkedIn—we can have a great conversation. Thanks again for having me on the podcast, Rohit. Exciting times ahead for all of us.

————————

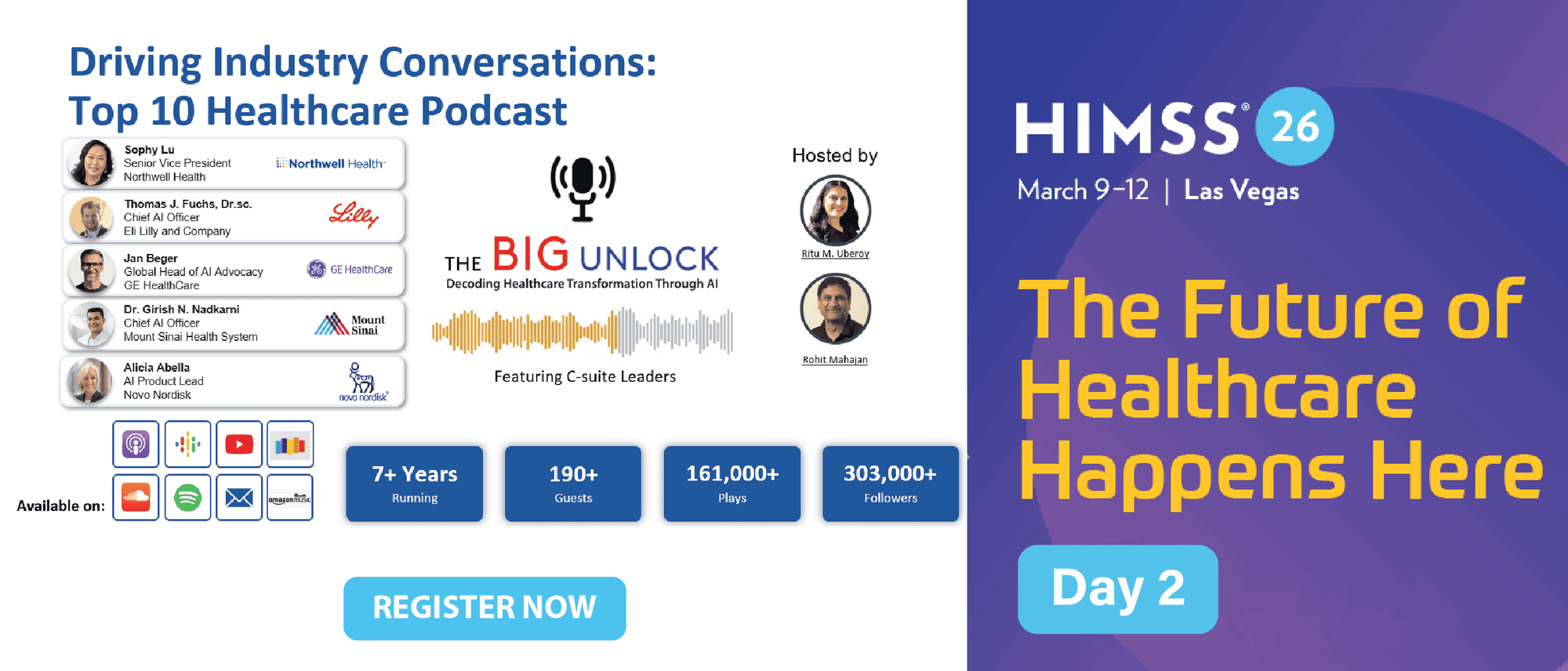

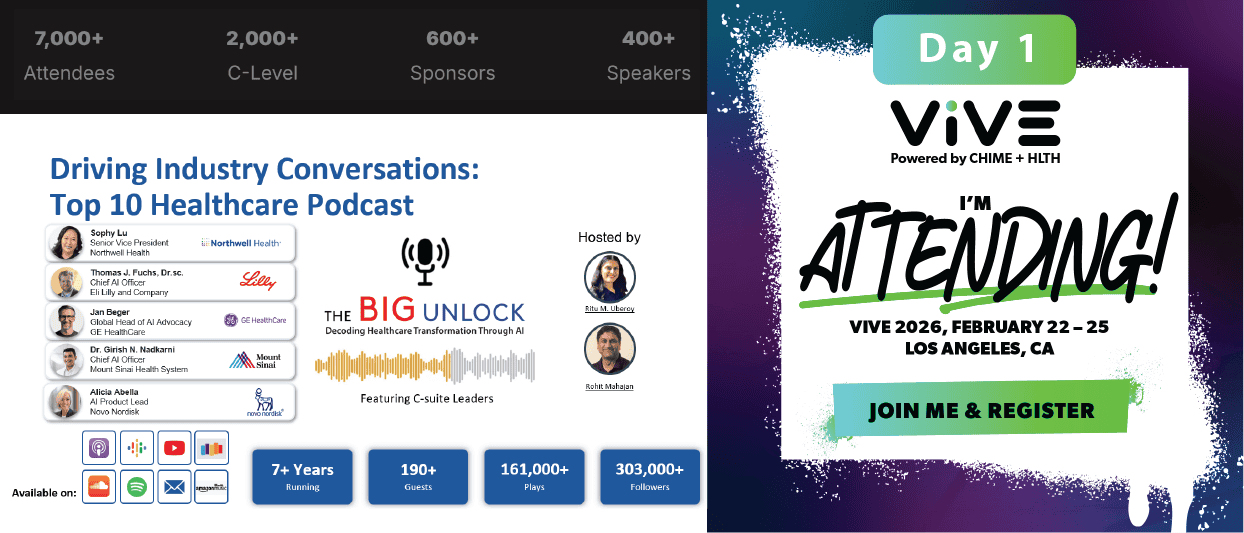

Subscribe to our podcast series at www.thebigunlock.com.

Disclaimer: This Q&A has been derived from the podcast transcript and has been edited for readability and clarity.